In a video from the early 1950s, Bell Labs scientist Claude Shannon demonstrates one of his new inventions: a toy mouse named Theseus that looks like it could be a wind-up. The gaunt Shannon, looking a bit like Gary Cooper, stands next to a handsomely crafted tabletop maze and explains that Theseus (which Shannon pronounces with two syllables: “THEE-soose”) has been built to solve the maze. Through trial and error, the mouse finds a series of unimpeded openings and records the successful route. On its second attempt, Theseus follows the right path, error-free from start to finish.

Shannon then unveils the secret to Theseus’s success: a dense array of electrical relays, sourced from the Bell System’s trove of phone-switching hardware. It is the 1950s equivalent of a computer chip, but it’s about a thousand times bigger and only a millionth as powerful as today’s hardware.

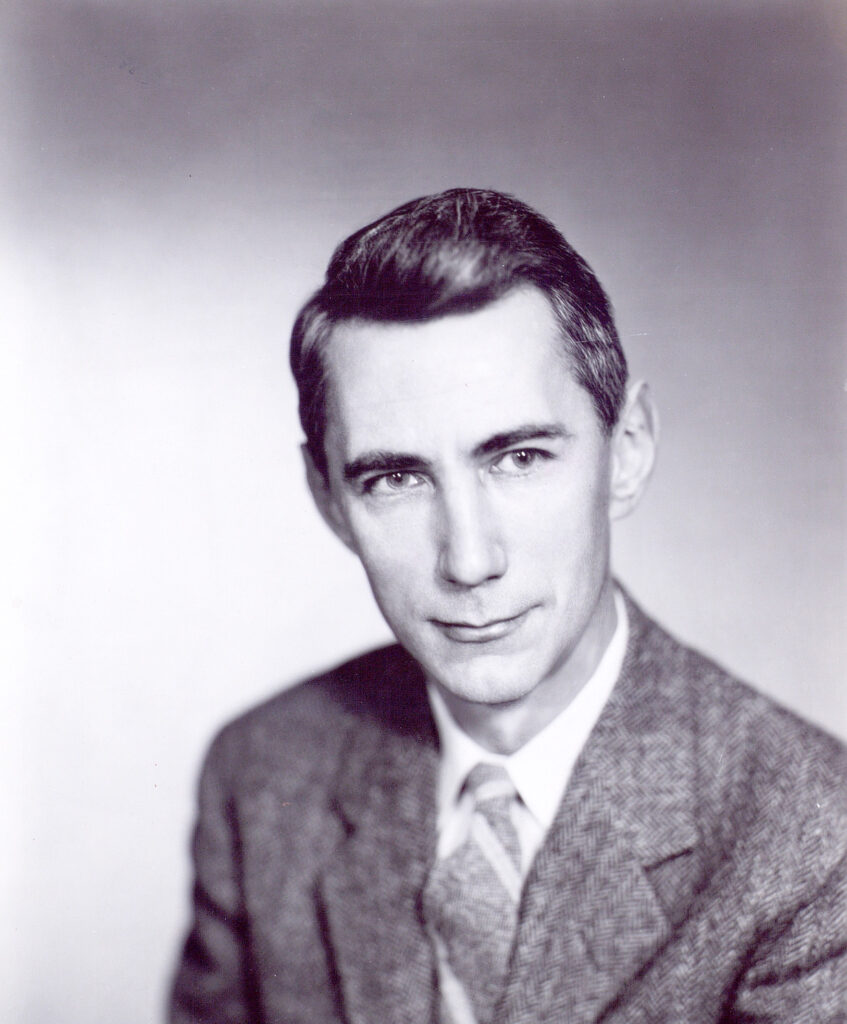

Claude Shannon’s achievements were at the level of an Einstein or a Feynman, but he has not achieved commensurate fame.

While some scientists and engineers may have recognized Theseus as something important—a tidy and clever example of a thinking machine—many in Shannon’s audience probably dismissed the contraption as a fancy wind-up toy, or maybe a fraudulent automaton in the tradition of the chess-playing Turk.

But the intellect behind Theseus was prodigious. Of the computer pioneers who drove the mid-20th-century information technology revolution—an elite men’s club of scholar-engineers who also helped crack Nazi codes and pinpoint missile trajectories—Shannon may have been the most brilliant of them all. His achievements were at the level of an Einstein or a Feynman, but Shannon has not achieved commensurate fame. It’s possible his playful tinkering caused some to write him off as unserious. But it’s also possible that his greatest work seemed unapproachable to most.

Shannon’s seminal work was profoundly abstract. As the “father of information theory,” he took the bold step of divorcing information from meaning, conceiving of messages as just collections of bits, devoid of an explicit connection to the world. In many ways his work is not only counterintuitive, but dismal and remote.

A new Shannon biography, A Mind at Play: How Claude Shannon Invented the Information Age, may help reverse this legacy. Authors Jimmy Soni and Rob Goodman make a strong bid to expose Shannon’s work to a popular audience, balancing a chronological narrative, the “Eureka!” moments that sprang from his disciplined approach to solving puzzles, and his propensity for playfulness. The book begins, for example, in the 1920s, when a young Shannon electrified the fence around his small-town Michigan home to transform it into a telegraph wire. By the end of the century, we find Shannon playing tour guide to a parade of MIT students at his suburban Boston home, a virtual museum of homemade gadgets and toys.

In between, Soni and Goodman sink their teeth into Shannon’s two greatest achievements. First, Shannon is likely the reason that almost all computers today are digital. In the 1930s, computer pioneers were essentially using watchmaking techniques to refine the wheels and cogs of hulking analog differential analyzers (“one of engineering’s long, blind alleys,” in Soni and Goodman’s words). Shannon set computer science definitively on the digital track with what is often called the most influential master’s thesis ever.

His 1937 MIT thesis, completed at age 21, demonstrated that the on-off switches of digital devices could be represented with the true-false notation developed eighty years prior by the English logician George Boole. Shannon single-handedly imported Boolean algebra into the task of electronic circuit design, radically streamlining the process and sealing off the blind alley of analog design once and for all.
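
The correspondence can be made concrete with a minimal sketch. It assumes the now-familiar convention that True stands for a closed switch (not necessarily the notation of the thesis itself), and the function names `series`, `parallel`, `circuit`, and `simplified` are illustrative inventions. Switches wired in series behave like Boolean AND, switches in parallel like OR, and an algebraic identity lets one wiring be swapped for an equivalent one.

```python
from itertools import product


def series(a: bool, b: bool) -> bool:
    """Two switches in series conduct only if both are closed (Boolean AND)."""
    return a and b


def parallel(a: bool, b: bool) -> bool:
    """Two switches in parallel conduct if either is closed (Boolean OR)."""
    return a or b


def circuit(a: bool, b: bool, c: bool) -> bool:
    """Hypothetical circuit: switch a in series with (b in parallel with c)."""
    return series(a, parallel(b, c))


def simplified(a: bool, b: bool, c: bool) -> bool:
    """An equivalent wiring given by algebra: (a AND b) OR (a AND c)."""
    return parallel(series(a, b), series(a, c))


# Tabulate the first circuit for all eight switch settings.
truth_table = {bits: circuit(*bits) for bits in product([False, True], repeat=3)}

# The two wirings agree on every setting, so they are interchangeable.
equivalent = all(
    circuit(*bits) == simplified(*bits)
    for bits in product([False, True], repeat=3)
)
```

Checking the eight rows of the truth table stands in for tracing current through every possible setting of the physical switches.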

Shannon may also be the reason that modern communication has progressed from the fritzy TV pictures of the 1950s to a civilization saturated with high-speed and ubiquitous multimedia data. Shannon’s crowning academic achievement, his 1948 publication of “A Mathematical Theory of Communication,” immediately found an audience among engineers looking to send messages faster and with greater fidelity. The paper’s deep analysis of messages—their information content, how that content can be converted to signals sent through a communication channel and then received intact at the end—provided the principles and lexicon for the transmission of all kinds of information, everywhere. Even if the names of the technologies (e.g. data compression, channel optimization, and noise reduction) mean nothing to you, you rely on them when you make a phone call, binge-watch a Netflix series, or tweet.

But while information theory’s offspring have been plentiful, its pure form has no obvious calling card. It’s too intangible; the essence of information theory is in its least practical aspects. As such, it is the perfect expression of Shannon’s gift for abstraction. Soni and Goodman write that Shannon “had a way of getting behind things. He loved the objects under his hands, right up to the point when he abstracted his way past them.”

As Shannon began work on information theory, he faced mid-century problems: making and breaking codes, sending messages intact over long distances through wires and through the air, and building a common phone network that could connect anyone to anyone. At the time, Soni and Goodman write, “Information was a presence offstage.” Shannon’s goal was to unite the many disparate problems of information under one comprehensive solution.

He delivered in 1948, after a solid decade of solitary “tooling” (in the parlance of MIT-bred engineers). His math had expanded to a consistent and complete system, applicable to every form of message transmitted through every possible communication channel. Shannon’s achievement in information theory was comparable to what Euclid’s Elements had done for geometry.

To make a rigorous and technical solution possible, Shannon constrained the problem. At the outset, he declared that meaning was “irrelevant to the engineering problem.” It would simply be too hard to evaluate successful transmission while taking into account all the “correlated. . . physical or conceptual entities” that make up a message’s meaning. He reduced the act of sending a message to selecting among finite possibilities and replicating the selection at the other end. This made accuracy measurable—simply compare the received message to the original.

For his working example, Shannon chose the English language. By using English, Shannon was able to appeal to readers’ intuition about what was sensible and what was nonsense. Although sense and nonsense were irrelevant under his “no meaning” stipulation, they were good intuitive proxies for accurate/inaccurate. The choice of English also opened up the language’s entire history as a literary database for both analytical and empirical information about how letters were used—their frequencies, as well as the patterns and frequencies of word combinations. Those statistics were an essential part of his model.

Shannon needed an atomic unit of information, so he created one. Harkening back to Boole, Shannon reduced letters, images and sounds to bits—strings of ones and zeros. Once a message was reduced to bits, the mathematical relationships began to emerge. A message delivered in text could be measured for its contribution to the recipient’s existing knowledge—or, put differently, its ability to resolve uncertainty. In information theory, that’s “information.”

Following Euclid’s model of axioms and postulates, Shannon went about the task of defining information theory’s elements and their roles in the system. For example, “redundancy”—predictable or even repetitive strings of bits—could either be dead weight in a message or counterweight to a garbled message unintelligible without repetition. Soni and Goodman relate how early transatlantic telegraphy, due to the distortion created by primitive underwater cables, often devolved into either long sequences of the same word over again, or requests for more redundancy—“Repeat, please.” (The authors describe a scene of “communication about communication, telegraphy as an especially bleak Samuel Beckett play.”)
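
The protective role of redundancy can be illustrated with the crudest possible scheme, a repetition code: send every bit several times and let the receiver take a majority vote. This is a sketch for illustration only, not a method taken from Shannon's paper or the biography, and the helper names (`encode`, `noisy_channel`, `decode`) and the bit-flipping channel model are assumptions.

```python
import random


def encode(bits, n=3):
    """Add redundancy by repeating each bit n times."""
    return [b for b in bits for _ in range(n)]


def noisy_channel(bits, flip_prob, rng):
    """Flip each bit independently with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]


def decode(bits, n=3):
    """Recover each original bit by majority vote over its n copies."""
    return [
        1 if 2 * sum(bits[i:i + n]) > n else 0
        for i in range(0, len(bits), n)
    ]


message = [1, 0, 1, 1]
sent = encode(message)                        # three copies of every bit
received = noisy_channel(sent, 0.05, random.Random())
recovered = decode(received)                  # usually equals message
```

The cost is plain: the encoded message is three times as long. A single flipped copy within a group of three is outvoted, but two flips in the same group still garble that bit.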

Shannon then observed that this complex system, dynamic and variable but governed by parameters, could be characterized as a Markov process. In a nutshell, a Markov process is random, but the probabilities of what comes next depend on its current state. For example, the next letter in an English sentence is random but depends on the current letter: “u” is very likely after “q.” This observation gave the study of communication access to the rich analytical toolkit already developed for Markov processes, which also describe real-world phenomena such as stock price movements, population growth, and queues for ice cream.
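
A minimal sketch of the letter example: count which character follows which in a sample text, then generate new text by repeatedly drawing the next character according to those counts. The sample sentence and the function names are made up for illustration, and a real model would be trained on far more text.

```python
import random
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each character, which characters follow it."""
    transitions = defaultdict(Counter)
    for current, following in zip(text, text[1:]):
        transitions[current][following] += 1
    return transitions


def generate(transitions, start, length, rng):
    """Walk the chain: each new character depends only on the previous one."""
    out = [start]
    for _ in range(length - 1):
        followers = transitions.get(out[-1])
        if not followers:
            break  # no observed successor, so the walk stops early
        letters, counts = zip(*followers.items())
        out.append(rng.choices(letters, weights=counts, k=1)[0])
    return "".join(out)


sample = "the quick queen quietly quit the quiz"
model = train_bigram_model(sample)
babble = generate(model, "t", 20, random.Random())
```

In this tiny sample every "q" is followed by "u", so the model never produces any other letter after "q"; the rest of the output is random but shaped by the counts.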

Just as measures of length, area, and volume are fundamental to Euclid’s geometry, the actual measure of information in a message was an essential building block for information theory. The units (bits) were defined, but how would Shannon go about determining the total bits in a message? Making a leap reminiscent of his Boolean insight, Shannon imported a concept from thermodynamics. In information theory, he argued, the amount of information in a message is its “entropy.”

In thermodynamics, the attributes of a system (such as temperature, volume, and energy) define its state. We may know a thermodynamic state has all of the above attributes but not know their values. Similarly, we may know a message uses a certain number of letters but not know which ones. In both cases, entropy measures how much uncertainty remains about that state (or message), which is also the average amount of information gained by learning it. A thermodynamic system with many possible configurations and a message that is hard to predict both have high entropy.
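
For a source that emits symbols with probabilities p, the standard formula for entropy is H = -Σ p·log2(p), measured in bits per symbol. The sketch below estimates those probabilities from the character frequencies of the message itself, which is a simplifying assumption: it ignores the dependencies between neighboring letters that a fuller model would capture. The function name is illustrative.

```python
import math
from collections import Counter


def entropy_bits_per_symbol(message):
    """Estimate entropy from single-character frequencies in the message."""
    counts = Counter(message)
    total = len(message)
    return -sum(
        (count / total) * math.log2(count / total)
        for count in counts.values()
    )


predictable = entropy_bits_per_symbol("aaaaaaaa")   # one symbol, no surprise
coin_flips = entropy_bits_per_symbol("abababab")    # two equally likely symbols
four_way = entropy_bits_per_symbol("abcdabcd")      # four equally likely symbols
```

A message that repeats one character carries zero bits per symbol, two equally likely characters carry one bit each, and four equally likely characters carry two.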

If you want to see the tools of information theory at work, consider this:

The Kindle e-book of Mario Puzo’s The Godfather is about a million bytes. I downloaded a 35,000-byte picture of Marlon Brando as Vito Corleone. So, since the book is about 172,000 words, or roughly 5.8 bytes per word, that makes the picture of Vito worth about 6,000 words.

For the record, the Word file of this review is about 35,000 bytes, the same size as the Vito Corleone photo. (When you’re writing about communication, the air grows thick with meta very quickly.)

Having built a construct as complete and powerful as information theory, Shannon gently made a point of noting the barriers to replicating his act. The “no meaning” caveat, in addition to making the math work, also challenged those following Shannon to match his standards of analytical rigor—and generally, to not overthink things.

Many did not get the message. After the release of Shannon’s 1948 paper, sojourners from all disciplines projected their own problems onto the blank canvas of information theory. (Soni and Goodman sum up the public’s overly effusive response to information theory in a chapter titled “TMI.”) Perhaps this was to be expected; in some respects, every scholar traffics in “information.” And despite his caveats, Shannon left the door ajar for cross-pollination by reaching into thermodynamics for the framework of entropy. But when interlopers didn’t take his cues about the necessity of rigor, he spelled it out for them.

In his 1956 essay “The Bandwagon,” Shannon wrote, “The establishing of [new] applications is not a trivial matter of translating words to a new domain, but rather the slow tedious process of hypothesis and experimental verification.”

Shannon rejected most of the new applications of information theory, but there was one exception. In the 1950s, he advised John L. Kelly Jr.—a younger member of the Bell Labs-MIT men’s club—on a paper linking information theory to gambling. Kelly noticed the mathematical similarities between the processes of deciding how much to bet on a given risk and determining the amount of information that can be successfully transmitted over a noisy channel. The paper laid out what is now known in finance theory as the Kelly Criterion, a rule for allocating capital to risky propositions, whether at the blackjack table or in the stock market.
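
The flavor of the rule can be seen in its simplest textbook form, sketched here for a single repeated bet that pays `net_odds` to 1 and wins with probability `p_win`. The function name and parameters are illustrative, and this is the standard simplified formula, f = p - (1 - p) / b, rather than a reconstruction of the derivation in Kelly's paper.

```python
def kelly_fraction(p_win, net_odds):
    """Fraction of the bankroll to stake on a bet paying net_odds to 1.

    Returns 0.0 when the bet has no positive edge.
    """
    if not 0.0 <= p_win <= 1.0:
        raise ValueError("p_win must be a probability between 0 and 1")
    if net_odds <= 0:
        raise ValueError("net_odds must be positive")
    edge = p_win - (1.0 - p_win) / net_odds
    return max(0.0, edge)


favorable = kelly_fraction(0.6, 1.0)   # even-money bet won 60% of the time
fair_coin = kelly_fraction(0.5, 1.0)   # no edge, so stake nothing
```

With a 60 percent chance of winning an even-money bet, the rule says to stake about a fifth of the bankroll; with a fair coin it says to stake nothing.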

In the interest of keeping their narrative manageable and centered on its subject, Soni and Goodman limit discussions of information theory’s extensions. But entropy and Kelly provide seductive examples, and it’s worth letting out the leash a bit to see where the extensions might run.

Information scholars are currently following the lead of physics, exploring beyond classical assumptions about the state of a communication system and incorporating concepts from quantum mechanics. Instead of using bits that resolve to one of two binary states, quantum information processing considers the possibility of information having multiple states that can be superposed. (The resulting quantum information measure is known as a “qubit.”) “Quantum Shannon theory” posits the existence of efficiencies (such as data compression or noise reduction techniques) that could apply to processes in the quantum world.

Are there other phenomena that could be understood and described using the template provided by information theory?

Maybe. In 1961, the Cambridge physiologist H. B. Barlow wrote a paper on how nervous systems may have evolved to encode and deliver messages with maximum efficiency. Barlow termed his model “the efficient coding hypothesis,” evoking Eugene Fama’s “efficient-market hypothesis.”

Fama’s work gave the world an analytical toolkit and lexicon for financial market risk and return. The parallels between information and economics identified by Kelly open the question of whether the concepts should complete the round-trip from Shannon to Kelly and back to Shannon. The information theory equivalents of financial concepts like risk, return, volatility, and the Sharpe Ratio could offer insight and discipline to some forms of communication.

Turning away from meaning was a pragmatic decision that led to Shannon’s greatest triumph. At the same time, it was a kind of trick, a litigator’s crafty courtroom maneuver. Shannon approached the bench at the beginning of the trial and had the judge declare any references to “meaning” inadmissible. This enabled him to win the case at hand—the engineering problem—but left unfulfilled the promise implied by the name “information theory.”

Without meaning, information theory can solve the engineering problem—but only the engineering problem. Granting engineering problems primacy in questions of meaning, however, seems like a hasty, ignominious surrender. We should at least wait to size up our coming mechanical overlords by taking stock of how current advances in artificial intelligence and machine learning play out. Soni and Goodman write that the rejection of meaning “formalized an intuition wired into the phone company—which was, after all, in the business of transmission, not interpretation.” It’s hard to avoid grim images of pale kings locked in bureaucracies executing menial tasks.

Shannon clearly did not envision that kind of future for information theory. Smack in the middle of his 1948 paper, in a statement that almost serves as a giant asterisk, Shannon turned to James Joyce:

Two extremes of redundancy in English prose are represented by Basic English and by James Joyce’s book Finnegans Wake. The Basic English vocabulary is limited to 850 words and the redundancy is very high. This is reflected in the expansion that occurs when a passage is translated into Basic English. Joyce on the other hand enlarges the vocabulary and is alleged to achieve a compression of semantic content.

Nowhere else in the paper does Shannon use the phrase “semantic content,” nor does he again suggest that some version of data compression can apply to it. In the paper’s second paragraph, fourteen pages earlier, he had already ruled out meaning. Yet it is impossible to conclude that this comment, referencing possibly the least-mechanical English-language writer of all, was anything but intentional. While winning his court case for a meaning-free information theory, Shannon left the door open for appeals.

Theoretical approaches to meaning, in fact, do abound. Contemporary literary criticism, for example, has made many Shannon-inspired appropriations of scientific concepts, as have Saussure’s semiotics, Derrida’s deconstructionism, a wide range of philosophical linguistics, and the more culturally oriented realm of media studies associated with Marshall McLuhan.

Despite Shannon’s admonitions, the hard scientists and engineers have also forged ahead into meaning.

One was Warren Weaver. Weaver was a scholar known for republishing Shannon’s 1948 paper with a less daunting, less math-y introduction, but around the same time, he also began making pivotal breakthroughs in machine translation. By definition, translation is a task that requires more than the mere replication of a message. Weaver’s approach embraced Shannon-inspired ideas about how to use word clusters and universal language elements to improve the power and accuracy of a translation. Weaver once wrote about a hack that would help prepare verbal inputs to make them digestible for even early computers:

“It is, of course, true that Basic [English] puts multiple use on an action verb such as ‘get.’ But, even so, the two-word combinations such as ‘get up,’ ‘get over,’ ‘get back,’ etc., are, in Basic, not really very numerous. Suppose we take a vocabulary of 2,000 words, and admit for good measure all the two-word combinations as if they were single words. The vocabulary is still only four million: and that is not so formidable a number to a modern computer, is it?”

Theseus, the toy mouse, was itself a harbinger of information theory’s expanding borders. It did more than just send or receive information about its maze. It sought information out and then used it to find the right path. In doing so, Theseus identified the relationships among “certain physical or conceptual entities” that Shannon considered “meaning.”

In considering meaning, the humanists and the scientists are heading toward the same destination. In a recent interview, A Mind at Play co-author Rob Goodman noted information theory’s potential to unify the two tribes:

Shannon’s life and work really called into question the whole “two cultures” paradigm, that math and science and the humanities on the other hand have very little to say to each other. . . . What Shannon was doing was not all simply hard math. It was thinking about problems that really, at the same time, consumed people in linguistics and philosophy as well.

Could information theory open a channel between the math-science tribe and the humanities tribe? Or perhaps between people and machines?

Consider Facebook’s recent decision to shut down Bob and Alice, two artificially intelligent chatbots. The bots were trained in English, but suddenly became fluent in a pidgin understandable only to each other. “I can i i everything else,” for example, was a phrase Bob used to negotiate with Alice about how to split up a task.

Some observers framed the occasion as a harbinger of the “technological singularity,” in which “superintelligent” machines develop the ability to improve themselves—and, so the story goes, take over the world. The concern is certainly legitimate, but in a calmer moment, it’s also interesting to consider how these bots mirror human behavior, and what that may say.

What was Bob’s comment if not jargon? It is reminiscent of the transformations that human language has undergone at the hands of text messaging (IMHO). When we use jargon, acronyms, and metaphors (and, adding more dimensions, stories and multimedia), isn’t data compression the goal?

Despite an apparent cultural chasm between bibliophiles and technophiles, the engineering concepts of Claude Shannon’s information theory correspond elegantly to the more human elements of communication. If we develop that thought further, information theory may have a role to play as a rubric for good communication—one that cuts across at least two cultures.