What We Owe the Future

William MacAskill

Basic Books, $32 (cloth)

“Space is big,” wrote Douglas Adams in The Hitchhiker’s Guide to the Galaxy (1979). “You just won’t believe how vastly, hugely, mind-bogglingly big it is. I mean, you may think it’s a long way down the road to the chemist’s, but that’s just peanuts to space.”

Time is big, too—even if we just think on the timescale of a species. We’ve been around for approximately 300,000 years. There are now about 8 billion of us, roughly 15 percent of all humans who have ever lived. You may think that’s a lot, but it’s just peanuts to the future. If we survive for another million years—the longevity of a typical mammalian species—at even a tenth of our current population, there will be 8 trillion more of us. We’ll be outnumbered by future people on the scale of a thousand to one.
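These figures can be checked in a few lines. The sketch assumes that each person lives about a century. The paragraph implies this but does not state it. On that assumption, a standing population of 800 million (a tenth of today's) turns over about ten thousand times in a million years. The 100-year lifespan and the variable names are ours.

```python
# Rough check of the population arithmetic in the paragraph above.
# ASSUMPTION: each person lives about 100 years, so the standing
# population is replaced roughly once per century.
CURRENT_POPULATION = 8e9   # about 8 billion people alive now
FUTURE_FRACTION = 0.1      # "even a tenth of our current population"
YEARS = 1_000_000          # longevity of a typical mammalian species
LIFESPAN_YEARS = 100       # hypothetical round lifespan (assumption)

standing_population = CURRENT_POPULATION * FUTURE_FRACTION
generations = YEARS / LIFESPAN_YEARS
future_people = standing_population * generations
ratio = future_people / CURRENT_POPULATION

print(f"future people: {future_people:.0e}")  # 8e+12, i.e. 8 trillion
print(f"ratio to present: {ratio:.0f} to 1")  # 1000 to 1
```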

What we do now affects those future people in dramatic ways: whether they will exist at all and in what numbers; what values they embrace; what sort of planet they inherit; what sorts of lives they lead. It’s as if we’re trapped on a tiny island while our actions determine the habitability of a vast continent and the life prospects of the many who may, or may not, inhabit it. What an awful responsibility.

This is the perspective of the “longtermist,” for whom the history of human life so far stands to the future of humanity as a trip to the chemist’s stands to a mission to Mars.

Oxford philosophers William MacAskill and Toby Ord, both affiliated with the university’s Future of Humanity Institute, coined the word “longtermism” five years ago. Their outlook draws on utilitarian thinking about morality. According to utilitarianism—a moral theory developed by Jeremy Bentham and John Stuart Mill in the nineteenth century—we are morally required to maximize expected aggregate well-being, adding points for every moment of happiness, subtracting points for suffering, and discounting for probability. When you do this, you find that tiny chances of extinction swamp the moral mathematics. If you could save a million lives today or shave 0.0001 percent off the probability of premature human extinction—a one in a million chance of saving at least 8 trillion lives—you should do the latter, allowing a million people to die.
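The expected-value comparison in this paragraph can be written as a small calculation. This is a sketch only. Like the paragraph, it ignores every other effect of either choice, and the variable names are ours.

```python
# Utilitarian expected-value comparison: probability times lives at stake.
lives_saved_today = 1_000_000   # a million lives saved for certain
risk_reduction = 1e-6           # 0.0001 percent = one in a million
future_lives_at_stake = 8e12    # "at least 8 trillion lives"

ev_save_today = 1.0 * lives_saved_today
ev_reduce_risk = risk_reduction * future_lives_at_stake

print(f"save today: {ev_save_today:.0f}")    # 1000000
print(f"reduce risk: {ev_reduce_risk:.0f}")  # 8000000
print("prefer risk reduction:", ev_reduce_risk > ev_save_today)
```

On these numbers, the risk reduction is worth about eight million expected lives, eight times the million saved today. That is why the calculation tells the utilitarian to let the million die.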

Now, as many have noted since its origin, utilitarianism is a radically counterintuitive moral view. It tells us that we cannot give more weight to our own interests or the interests of those we love than the interests of perfect strangers. We must sacrifice everything for the greater good. Worse, it tells us that we should do so by any effective means: if we can shave 0.0001 percent off the probability of human extinction by killing a million people, we should—so long as there are no other adverse effects.

But even if you think we are allowed to prioritize ourselves and those we love, and not allowed to violate the rights of some in order to help others, shouldn’t you still care about the fate of strangers, even those who do not yet exist? The moral mathematics of aggregate well-being may not be the whole of ethics, but isn’t it a vital part? It belongs to the domain of morality we call “altruism” or “charity.” When we ask what we should do to benefit others, we can’t ignore the disquieting fact that the others who occupy the future may vastly outnumber those who occupy the present, and that their very existence depends on us.

From this point of view, it’s an urgent question how what we do today will affect the further future—urgent especially when it comes to what Nick Bostrom, the philosopher who directs the Future of Humanity Institute, calls the “existential risk” of human extinction. This is the question MacAskill takes up in his new book, What We Owe the Future, a densely researched but surprisingly light read that ranges from omnicidal pandemics to our new AI overlords without ever becoming bleak.

Like Bostrom, MacAskill has a big audience—unusually big for an academic philosopher. Bill Gates has called him “a data nerd after my own heart.” In 2009 he and Ord helped found Giving What We Can, an organization that encourages people to pledge at least 10 percent of their income to charitable causes. With our tithe, MacAskill holds, we should be utilitarian, aggregating benefits, subtracting harms, and weighing odds: our 10 percent should be directed to the most effective charities, gauged by ruthless empirical measures. Thus the movement known as Effective Altruism (EA), in which MacAskill is a leading figure. (Peter Singer is another.) By one estimate, about $46 billion is now committed to EA. The movement counts among its acolytes such prominent billionaires as Peter Thiel, who gave a keynote address at the 2013 EA Summit, and cryptocurrency exchange pioneer Sam Bankman-Fried, who became a convert as an undergraduate at MIT.

Effective Altruists need not be utilitarians about morality (though some are). Theirs is a bounded altruism, one that respects the rights of others. But they are inveterate quantifiers, and when they do the altruistic math, they are led to longtermism and to the quietly radical arguments of MacAskill’s book. “Future people count,” MacAskill writes:

There could be a lot of them. We can make their lives go better. This is the case for longtermism in a nutshell. The premises are simple, and I don’t think they’re particularly controversial. Yet taking them seriously amounts to a moral revolution.

The premises are indeed simple. Most people concerned with the effects of climate change would accept them. Yet MacAskill pursues these premises to unexpected ends. If the premises are true, he argues, we should do what we can to ensure that “future civilization will be big.”

MacAskill spends a lot of time and effort asking how to benefit future people. What I’ll come back to is the moral question whether they matter in the way he thinks they do, and why. As it turns out, MacAskill’s moral revolution rests on contentious, counterintuitive claims in “population ethics.”

When it comes to the how, MacAskill is fascinating—if, at times, alarming. Since having a positive influence on the long-term future is “a key moral priority of our time,” he writes, we need to estimate what influence our actions will have. It is difficult to predict what will happen over many thousands of years, of course, and MacAskill doesn’t approach the task alone: his book, he tells us, relies on a decade of research, including two years of fact-checking, in consultation with numerous “domain experts.”

The long-term value of working for a given outcome is a function of that outcome’s significance (what MacAskill calls its “average value added”), its persistence or longevity, and its contingency—the extent to which it depends on us and wouldn’t happen anyway.
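The sketch below makes the three factors concrete by scoring two invented outcomes. The review says only that long-term value is "a function of" the factors. Several details are our illustrative assumptions: the multiplicative form, the class and field names, and all the numbers.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    """A hypothetical outcome scored on the three factors in the text."""
    name: str
    average_value_added: float  # significance: value added per year it holds
    persistence_years: float    # how long the outcome lasts
    contingency: float          # 0 to 1: how far it would NOT happen anyway

    def long_term_value(self) -> float:
        # ASSUMPTION: the three factors combine by simple multiplication.
        return self.average_value_added * self.persistence_years * self.contingency


# Invented numbers, for illustration only.
outcomes = [
    Outcome("near-inevitable technology", 8.0, 100.0, 0.25),
    Outcome("contingent shift in values", 2.0, 1000.0, 0.5),
]

for o in sorted(outcomes, key=Outcome.long_term_value, reverse=True):
    print(f"{o.name}: {o.long_term_value():.0f}")
```

On these made-up figures, the less significant outcome comes out ahead because it is more persistent and more contingent. That is the shape of reasoning that leads MacAskill to focus on values.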

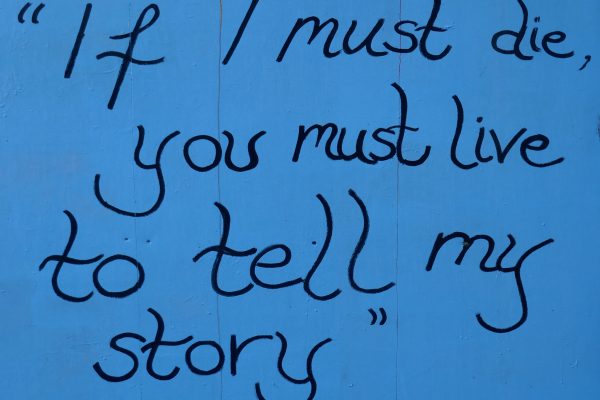

Among the most significant and persistent determinants of life for future generations, MacAskill argues, are the values we pass on to them. And values are often contingent. MacAskill takes as a case study the abolition of slavery in the nineteenth century. Was it, he asks, “more like the use of electricity—a more or less inevitable development once the idea was there?” or “like the wearing of neckties: a cultural contingency that became nearly universal globally but which could quite easily have been different?” Slavery had been abolished in Europe once before, in the late middle ages, only to return with a vengeance. Was it destined to decline again? MacAskill cites historian Christopher Leslie Brown, writing in Moral Capital (2006): “In key respects the British antislavery movement was a historical accident, a contingent event that just as easily might never have occurred.” Values matter to the long-term future, and they are subject to intentional change.

From here we lurch to the alarming: MacAskill is worried about the development of artificial general intelligence (AGI), capable of performing as wide a range of tasks as we do, at least as well as we do. He rates the chances of AGI arriving in the next fifty years no lower than one in ten. The risk is that, if AGI takes over the world, its creators’ vision may be locked in for a very long time. “If we don’t design our institutions to govern this transition well—preserving a plurality of values and the possibility of desirable moral progress,” MacAskill writes, “then a single set of values could emerge dominant.” The results might be dystopian: What if the AGI that rules the world belongs to a fascist government or a rapacious trillionaire?

MacAskill calls for better regulation of AI research to preserve space for reflection, open-mindedness, and political experimentation. Most of us would not object. But, as is often the case in discussions of AI—and despite the salience of contingency—MacAskill tends to treat the progress of technology as a given. We can hope to govern the transition to AGI well, but the transition is certainly coming. What we do “could affect what values are predominant when AGI is first built,” MacAskill notes—but not whether it is built at all. Like the philosopher Annette Zimmermann, I hope that isn’t true.

MacAskill may be reconciled to AGI, himself, by the hope that it will address another long-term problem: the threat of economic and technological stagnation. Again, his argument is both fascinating and alarming. “For the first 290,000 years of humanity’s existence,” MacAskill writes, “global growth was close to 0 percent per year; in the agricultural era that increased to around 0.1 percent, and it accelerated from there after the Industrial Revolution. It’s only in the last hundred years that the world economy has grown at a rate above 2 percent per year.” But it can’t go on forever: “if current growth rates continued for just ten millennia more, there would have to be ten million trillion times as much output as our current world produces for every atom that we could, in principle, access”—that is, for every atom within ten thousand light years of Earth. “Though of course we can’t be certain,” MacAskill drily concludes, “this just doesn’t seem possible.”
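The growth arithmetic can be checked on a logarithmic scale. The count of about 10^67 atoms within ten thousand light years is an assumption we supply for this check, since the paragraph gives only the resulting ratio. Treat the output as an order-of-magnitude sanity test, not as MacAskill's own calculation.

```python
import math

GROWTH_RATE = 0.02           # "above 2 percent per year"
YEARS = 10_000               # "ten millennia"
ATOMS_ACCESSIBLE_LOG10 = 67  # ASSUMPTION: ~10^67 atoms within 10,000 light years

# Work in log10 so the exponents are easy to read: 1.02**10000 is about 10^86.
growth_factor_log10 = YEARS * math.log10(1 + GROWTH_RATE)
output_per_atom_log10 = growth_factor_log10 - ATOMS_ACCESSIBLE_LOG10

print(f"growth factor ~ 10^{growth_factor_log10:.0f}")
print(f"output per accessible atom ~ 10^{output_per_atom_log10:.0f} "
      "times today's world output")
```

Ten to the nineteenth power is ten million trillion (10^7 times 10^12), which matches the figure quoted.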

There is evidence that technological progress has already slowed down outside the areas of computation and AI. The rate of growth in “total factor productivity”—our ability to get more economic output from the same input—is declining, and according to a recent study by economists at Stanford and the London School of Economics, new ideas are increasingly scarce. MacAskill illustrates this neatly, imagining how your life would change in fifty years. When you go from 1870 to 1920, you get running water, electricity, a telephone, and perhaps a car. When you go from 1970 to 2020, you get a microwave oven and a bigger TV. The only dramatic shifts are in computing and communications. Without the magic bullet of AGI, through which we might build unlimited AI workers in R&D, MacAskill fears that we are doomed to stagnation, perhaps for hundreds or thousands of years. But from his perspective, if it’s not permanent, and people don’t wish they’d never been born, it’s not so bad. The worst things about stagnation, for MacAskill, are the dangers of misguided value lock-in and of extinction or permanent collapse.

Here we come, at last, to existential risks: asteroid collisions, which we might not detect; lethal pandemics, which might be bioengineered; World War III, which might turn nuclear; and climate change, which might accelerate through feedback in the climate system. MacAskill applauds NASA’s Spaceguard program, calls for better pandemic preparedness and biotech safety (experts, he notes, “put the probability of an extinction-level engineered pandemic this century at around 1 percent”), and supports a rapid shift to green energy.

But what is most alarming in his approach is how little he is alarmed. As of 2022, the Bulletin of the Atomic Scientists set the Doomsday Clock, which measures our proximity to doom, at 100 seconds to midnight, the closest it’s ever been. According to a study commissioned by MacAskill, however, even in the worst-case scenario—a nuclear war that kills 99 percent of us—society would likely survive. The future trillions would be safe. The same goes for climate change. MacAskill is upbeat about our chances of surviving seven degrees of warming or worse: “even with fifteen degrees of warming,” he contends, “the heat would not pass lethal limits for crops in most regions.”

This is shocking in two ways. First, because it conflicts with credible claims one reads elsewhere. The last time the temperature was six degrees higher than preindustrial levels was 251 million years ago, in the Permian-Triassic Extinction, the most devastating of the five great extinctions. Deserts reached almost to the Arctic and more than 90 percent of species were wiped out. According to environmental journalist Mark Lynas, who synthesized current research in Our Final Warning: Six Degrees of Climate Emergency (2020), at six degrees of warming the oceans will become anoxic, killing most marine life, and they’ll begin to release methane hydrate, which is flammable at concentrations of five percent, creating a risk of roving firestorms. It’s not clear how we could survive this hell, let alone fifteen degrees.

The second shock is how much more MacAskill values survival in the long term over a decrease of suffering and death in the near future. This is the sharp end of longtermism. Most of us agree that (1) world peace is better than (2) the death of 99 percent of the world’s population, which is better in turn than (3) human extinction. But how much better? Where many would see a greater gap between (1) and (2) than between (2) and (3), the longtermist disagrees. The gap between (1) and (2) is a temporary loss of population from which we will (or at least may) bounce back; the gap between (2) and (3) is “trillions upon trillions of people who would otherwise have been born.” This is the “insight” MacAskill credits to the iconic moral philosopher Derek Parfit. It’s the ethical crux of the most alarming claims in MacAskill’s book. And there is no way to evaluate it without dipping our toes into the deep, dark waters of population ethics.

Population ethicists ask how good the world would be with a given population distribution, specified by the number of people existing at various levels of lifetime well-being throughout space and time. Should we measure by total aggregate well-being? By average? Should we care about distribution, rating inequitable outcomes worse? As MacAskill writes, population ethics is “one of the most complex areas of moral philosophy . . . normally studied only at the graduate level. To my knowledge, these ideas haven’t been presented to a general audience before.” But he gives it his best, and with trepidation, I’ll follow suit.

At the heart of the debate is what MacAskill calls “the intuition of neutrality,” elegantly expressed by moral philosopher Jan Narveson in a much-cited slogan: “We are in favour of making people happy, but neutral about making happy people.” The appeal of the slogan is apparent at scales both large and small. Suppose you are told that humanity will go extinct in a thousand years but also that everyone who lives will have a good enough life. Should you care if the average population each year is closer to 1 billion or 2? Neutrality says no. What matters is quality, not quantity.

Now suppose you are deciding whether to have a child, and you expect that your child would have a good enough life. Must you conclude that it would be better to have a child than not, unless you can point to some countervailing reason? Again, neutrality says no. In itself, adding an extra life to the world is no better (or worse) than not doing so. It’s entirely up to you. It doesn’t follow that you shouldn’t care about the well-being of your potential child. Instead, there’s an asymmetry: although it is not better to have a happy child than no child at all, it is worse to have a child whose life is not worth living.

Longtermists deny neutrality: they argue that it’s always better, other things equal, if another person exists, provided their life is good enough. That’s why human extinction looms so large. A world in which we have trillions of descendants living good enough lives is better than a world in which humanity goes extinct in a thousand years—better by a vast, huge, mind-boggling margin. A chance to reduce the risk of human extinction by 0.01 percent, say, is a chance to make the world an inconceivably better place. It’s a greater contribution to the good, by several orders of magnitude, than saving a million lives today.

But if neutrality is right, the longtermist’s mathematics rest on a mistake: the extra lives don’t make the world a better place, all by themselves. Our ethical equations are not swamped by small risks of extinction. And while we may be doing much less than we should to address the risk of a lethal pandemic, value lock-in, or nuclear war, the truth is much closer to common sense than MacAskill would have us believe. We should care about making the lives of those who will exist better, or about the fate of those who will be worse off, not about the number of good lives there will be. According to MacAskill, the “practical upshot” of longtermism “is a moral case for space settlement,” by which we could increase the future population by trillions. If we accept neutrality, by contrast, we will be happy if we can make things work on Earth.

An awful lot turns on the intuition of neutrality, then. MacAskill gives several arguments against it. One is about the ethics of procreation. If you are thinking of having a child, but you have a vitamin deficiency that means any child you conceive now will have a health condition—say, recurrent migraines—you should take vitamins to resolve the deficiency before you try to get pregnant. But then, MacAskill argues, “having a child cannot be a neutral matter.” The steps of his argument, a reductio ad absurdum, bear spelling out. Compare having no child with having a child who has migraines, but whose life is still worth living. “According to the intuition of neutrality,” MacAskill writes, “the world is equally good either way.” The same is true if we compare having no child with waiting to get pregnant in order to have a child who is migraine-free. From this it follows, MacAskill claims, that having a child with recurrent migraines is as good an outcome as having a child without. That’s absurd. In order to avoid this consequence, MacAskill concludes, we must reject neutrality.

But the argument is flawed. Neutrality says that having a child with a good enough life is on a par with staying childless, not that the outcome in which you have a child is equally good regardless of their well-being. Consider a frivolous analogy: being a philosopher is on a par with being a poet—neither is strictly better or worse—but it doesn’t follow that being a philosopher is equally good, regardless of the pay.

A striking fact about cases like the one MacAskill cites is that they are subject to a retrospective shift. If you are planning to have a child, you should wait until your vitamin deficiency is resolved. But if you don’t wait and you give birth to a child, migraines and all, you should love them and affirm their existence—not wish you had waited, so that they’d never been born. This shift explains what’s wrong with a second argument MacAskill makes against neutrality. Thinking of his nephew and two nieces, MacAskill is inclined to say that the world is “at least a little better” for their existence. “If so,” he argues, “the intuition of neutrality is wrong.” But again, the argument is flawed. Once someone is born, you should welcome their existence as a good thing. It doesn’t follow that you should have seen their coming to exist as an improvement in the world before they came into existence. Neutrality survives intact.

In rejecting neutrality, MacAskill leans toward the “total view” on which one population distribution is better than another if it has greater aggregate well-being. This is, in effect, a utilitarian approach to population ethics. The total view says that it’s always better to add an extra life, if the life is good enough. It thus supports the longtermist view of existential risks. But it also implies what is known as the Repugnant Conclusion: that you can make the world a better place by doubling the population while making people’s lives a little worse, a sequence of “improvements” that ends with an inconceivably vast population whose lives are only just worth living. Sufficient numbers make up for lower average well-being, so long as the level of well-being remains positive.
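A toy sequence shows the mechanics of the Repugnant Conclusion. The sketch uses invented numbers and arbitrary well-being units. Each step doubles the population and cuts everyone's well-being by a quarter. Doubling outweighs any cut smaller than half, so the total view ranks every step as an improvement while individual lives shrink toward the margin.

```python
# HYPOTHETICAL numbers; well-being units are arbitrary.
population = 1_000
well_being = 100.0  # per-person lifetime well-being, kept positive

for _ in range(10):
    new_population = population * 2
    new_well_being = well_being * 0.75  # each life gets worse by a quarter
    old_total = population * well_being
    new_total = new_population * new_well_being
    # Total view: the new world counts as better whenever its total is higher.
    assert new_total > old_total
    population, well_being = new_population, new_well_being

print(population, round(well_being, 2), round(population * well_being))
# → 1024000 5.63 5766504
```

After ten steps, average well-being has fallen from 100 to under 6, while the total has grown from 100,000 to more than 5.7 million.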

Many regard the Repugnant Conclusion as a refutation of the total view. MacAskill does not. “In what was an unusual move in philosophy,” he reports, “a public statement was recently published, cosigned by twenty-nine philosophers, stating that the fact that a theory of population ethics entails the Repugnant Conclusion shouldn’t be a decisive reason to reject that theory. I was one of the cosignatories.” But you can’t outvote an objection. Imagine the worst life one could live without wishing one had never been born. Now imagine the kind of life you dream of living. For those who embrace the Repugnant Conclusion, a future in which trillions of us colonize planets so as to live the first sort of life is better than a future in which we survive on Earth in modest numbers, achieving the second.

MacAskill has a final argument, drawing on work by Parfit and by economist-philosopher John Broome. “Though the Repugnant Conclusion is unintuitive,” he concedes, “it turns out that it follows from three other premises that I would regard as close to indisputable.” The details are technical, but the upshot is a paradox: the premises of the argument seem true, but the conclusion does not. As it happens, I am not convinced that the premises are compelling once we distinguish those who exist already from those who may or may not come into existence, as we did with MacAskill’s nephew and nieces. But the main thing to say is that basing one’s ethical outlook on the conclusion of a paradox is bad form. It’s a bit like concluding from the paradox of the heap—adding just one grain of sand is not enough to turn a non-heap into a heap; so, no matter how many grains we add, we can never make a heap of sand—that there are no heaps of sand. This is a far cry from MacAskill’s “simple” starting point.

Nor does MacAskill stop here; he goes well beyond the Repugnant Conclusion. Since it’s not just human well-being that counts, for him, he is open to the view that human extinction wouldn’t be so bad if we were replaced by another intelligent species, or a civilization of conscious AIs. What matters to the longtermist is aggregate well-being, not the survival of humanity.

Nonhuman animals count, too. Though their capacity for well-being varies widely, “we could, as a very rough heuristic, weight animals’ interests by the number of neurons they have.” When we do this, “we get the conclusion that our overall views should be almost entirely driven by our views on fish.” By MacAskill’s estimate, we humans have fewer than a billion trillion neurons altogether, whereas wild fish have three billion trillion. In the total view, they matter three times as much as we do.

Don’t worry, though. We shouldn’t put their lives before our own, since there is reason to believe their lives are terrible. “If we assess the lives of wild animals as being worse than nothing on average, which I think is plausible (though uncertain),” MacAskill writes, “we arrive at the dizzying conclusion that from the perspective of the wild animals themselves, the enormous growth and expansion of Homo sapiens has been a good thing.” That’s because human growth and expansion are sparing them from all that misery. From this perspective, the anoxic oceans of six-degree warming come as a merciful release.

In Plato’s Republic, prospective philosopher-kings begin their education in dialectic or abstract reasoning at age thirty, after years of gymnastics, music, and math. At thirty-five, they are assigned jobs in the administration of the city, like minor civil servants. Only at the age of fifty do they turn to the Good itself, leaving the cave of political life for the sunlight of philosophy, a gift they repay by deigning to rule.

MacAskill is just thirty-five. But like a philosopher-king, he follows the path of dialectic from the shadows of convention to the blazing glare of a new moral vision, returning to the cave to tell us some of what he’s learned. MacAskill calls the early Quaker abolitionist Benjamin Lay “a moral entrepreneur: someone who thought deeply about morality, took it very seriously, was utterly willing to act in accordance with his convictions, and was regarded as an eccentric, a weirdo, for that reason.” According to MacAskill: “We should aspire to be weirdos like him.”

MacAskill styles himself as a moral entrepreneur too. His goal is to build a social movement, to win converts to longtermism. After all, if you want to make the long-term future better, and our values are among the most significant, persistent, contingent determinants of how it will go, there is a longtermist case for working hard to make a lot of us longtermists. For all I know, MacAskill may succeed in this. But as I’ve argued, the truth of his moral outlook—which rejects neutrality and gives no special weight to human beings—is a lot less clear than the injustice of slavery.

To his credit, MacAskill admits room for doubt, conceding that he may be wrong about the total view in population ethics. But he also has a view about what to do when you’re not sure of the moral truth: assign a probability to the truth of each moral view, “then take the action that is the best compromise between those views—the action with the highest expected value.” This raises problems of both theory and practice.

In practice, there is a threat that longtermist thinking will dominate expected value calculations in the same way as tiny risks of human extinction. If there is even a 1 percent chance of longtermism being true, and it tells us that reducing existential risks is many orders of magnitude more important than saving lives now, these numbers may swamp the prescriptions of more modest moral visions.
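A toy version of the expected-value rule for moral uncertainty shows how this swamping happens. Everything here is hypothetical: the two theories, their scores, and above all the assumption that different moral theories can be placed on one common scale. That last assumption is itself disputed.

```python
# Hypothetical sketch of choosing the option with the highest expected
# value across moral theories, weighted by credence in each theory.
# ASSUMPTION: both theories score options on one shared scale.
credences = {"longtermism": 0.01, "common_sense": 0.99}

# Value of each option under each theory (made-up numbers).
scores = {
    "save_lives_now": {"longtermism": 1.0, "common_sense": 1.0},
    "reduce_existential_risk": {"longtermism": 1e6, "common_sense": 0.5},
}


def expected_value(option: str) -> float:
    """Credence-weighted sum of an option's scores across theories."""
    return sum(credences[t] * scores[option][t] for t in credences)


for option in scores:
    print(option, round(expected_value(option), 3))

best = max(scores, key=expected_value)
print("best:", best)
```

Common sense prefers saving lives now, and it gets 99 percent of the credence. Even so, the 1 percent credence in longtermism, multiplied by its enormous stakes, decides the outcome.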

The theoretical problem is that we ought to be uncertain about this way of handling moral uncertainty. What should we do when uncertainty goes all the way down? At some point, we fall back on moral judgment and face what philosophers have called the problem of “moral luck.” What we ought to do, whatever our beliefs, is to act in accordance with the moral truth of how to act with those beliefs. There’s no way to insure ourselves against moral error—to guarantee that, while we may have made mistakes, at least we acted as we should, given what we believed. For we may be wrong about that, too.

There are profound divisions here, not just about the content of our moral obligations but about the nature of morality itself. For MacAskill, morality is a matter of detached, impersonal theorizing about the good. For others, it is a matter of principles by which we could reasonably agree to govern ourselves. For still others, it’s an expression of human nature. At the end of his book, MacAskill includes a postscript, titled “Afterwards.” It is a fictionalized version of how the future might go well, from the perspective of longtermism. After making plans to colonize space, MacAskill’s utopians pause to think about how.

There followed a period of extensive discussion, debate, and trade that became known, simply, as the Reflection. Everyone tried to figure out for themselves what was truly valuable and what an ideal society would look like. Progress was faster than expected. It turned out that moral philosophy was not intrinsically hard; it’s just that human brains are ill-suited to tackle it. For specially trained AIs, it was child’s play.

I think this vision is misguided, and not just because there are serious arguments against space colonization. Moral judgment is one thing; machine learning is another. Whatever is wrong with utilitarians who advocate the murder of a million for a 0.0001 percent reduction in the risk of human extinction, it isn’t a lack of computational power. Morality isn’t made by us—we can’t just decide on the moral truth—but it’s made for us: it rests on our common humanity, which AI cannot share.

What We Owe the Future is an instructive, intelligent book. It has a lot to teach us about history and the future, about neglected risks and moral myopia. But a moral arithmetic is only as good as its axioms. I hope readers approach longtermism with the open-mindedness and moral judgment MacAskill wants us to preserve, and that its values are explored without ever being locked in.